In the AI field, new large language models launch almost daily, and things are changing at a breakneck pace. Within just a few months, it has become possible to run a ChatGPT-like LLM offline on a PC. Not just that, we can train an AI chatbot and create a personalized AI assistant. But what has intrigued me recently is Microsoft’s hands-on approach to AI development. Microsoft is currently working on an advanced AI system called JARVIS (an obvious reference to Marvel’s Iron Man) that connects to multiple AI models and combines their outputs into a final result. Its demo is hosted on Hugging Face, and anyone can check out JARVIS’s capabilities right now. So if you’re interested, go ahead and learn how to use Microsoft JARVIS (HuggingGPT).

What is Microsoft JARVIS (HuggingGPT)?

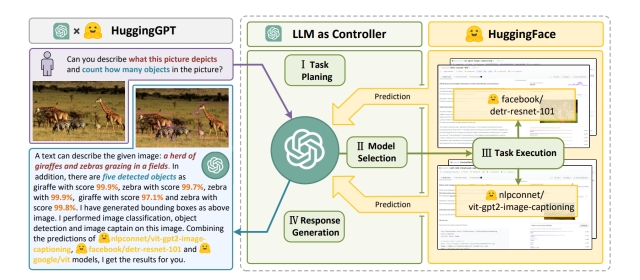

Microsoft has developed a collaborative system in which multiple AI models work together to complete a given task, with ChatGPT acting as the controller. The project is called JARVIS on GitHub, and it’s now available on Hugging Face (hence the name HuggingGPT) for people to try out. In our testing, it worked well with text, images, audio, and even video.

It works similarly to how OpenAI demonstrated GPT-4’s multimodal capabilities with text and images. However, JARVIS takes it one step further and integrates various open-source models for images, video, audio, and more. The best part is that it can also connect to the internet and access files. For example, you can enter the URL of a web page and ask questions about it. That’s pretty cool, right?

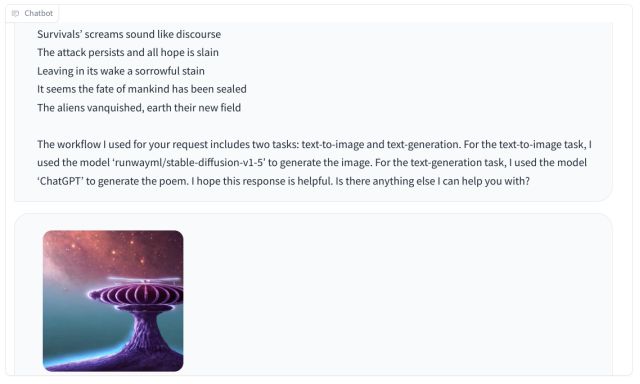

You can add multiple tasks in a single query. For example, you can ask it to generate an image of an alien invasion and write poetry about it. Here, ChatGPT analyzes the request and plans the task. After that, ChatGPT selects the correct model (hosted on Huggingface) to achieve the task. The selected model completes the task and returns the result to ChatGPT.

Finally, ChatGPT generates the response using inference results from all the models. For this task, JARVIS used the Stable Diffusion 1.5 model to generate the image and used ChatGPT itself to write a poem.
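The four stages described above (plan the task, select a model, run it, generate the response) can be pictured with a small Python sketch. This is only an illustration under stated assumptions: the `Task` class, the `MODEL_FOR_TASK` table, and every function name here are hypothetical, and the model calls are replaced with placeholder strings. It is not the code JARVIS actually runs.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """One unit of work in the plan (hypothetical structure)."""
    kind: str         # e.g. "text-to-image"
    prompt: str       # what the selected model is asked to do
    result: str = ""  # filled in after the model has run


# Hypothetical lookup table. The real system chooses among models
# hosted on Hugging Face; these two entries mirror the example above.
MODEL_FOR_TASK = {
    "text-to-image": "stable-diffusion-1.5",
    "text-generation": "chatgpt",
}


def plan_tasks(request: str) -> list[Task]:
    """Stage 1: split the request into tasks.

    In the real system ChatGPT does the planning; here the plan is hard-coded.
    """
    return [
        Task("text-to-image", f"an image of {request}"),
        Task("text-generation", f"a poem about {request}"),
    ]


def select_model(task: Task) -> str:
    """Stage 2: pick a model for the task."""
    return MODEL_FOR_TASK[task.kind]


def run_model(model: str, task: Task) -> str:
    """Stage 3: placeholder for the actual model inference."""
    return f"[{model}] {task.prompt}"


def generate_response(tasks: list[Task]) -> str:
    """Stage 4: combine the results of all tasks into one reply."""
    return "\n".join(task.result for task in tasks)


def handle_request(request: str) -> str:
    tasks = plan_tasks(request)
    for task in tasks:
        task.result = run_model(select_model(task), task)
    return generate_response(tasks)


if __name__ == "__main__":
    print(handle_request("an alien invasion"))
```

The point of the sketch is the division of labor: the controller only plans and summarizes, while each task is handed to whichever model suits it.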

There are as many as 20 models linked to JARVIS (HuggingGPT). Some of them are t5-base, stable-diffusion 1.5, bert, Facebook’s bart-large-cnn, Intel’s dpt-large, and more. To sum up, if you want multimodal capabilities right now, check out Microsoft JARVIS. Here is how to set it up and test it:

Step 1: Get the Keys to Use Microsoft JARVIS

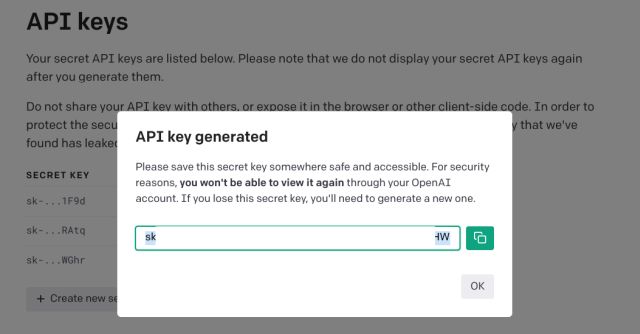

1. First, head to this link, log into your OpenAI account, and click on “Create new secret key” to get your OpenAI API key. Save the key in a Notepad file for later use.

2. Next, open the huggingface.co website and create a free account.

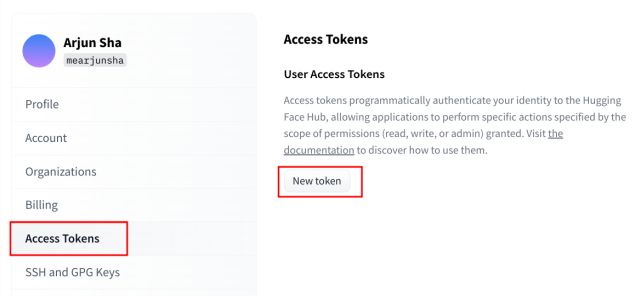

3. After that, click on this link to generate your Hugging Face token. Click on “New token” in the right pane.

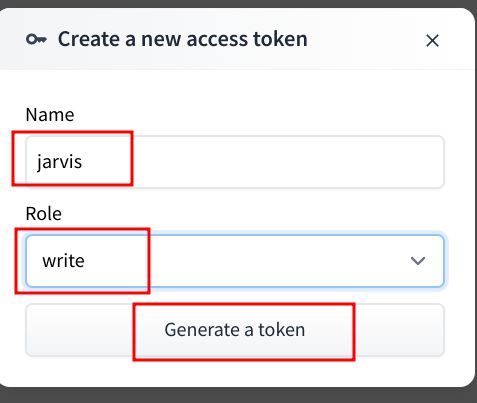

4. Here, enter a name (for example, I have entered the name “jarvis”). Then, change the Role to “Write” and click on “Generate a token”.

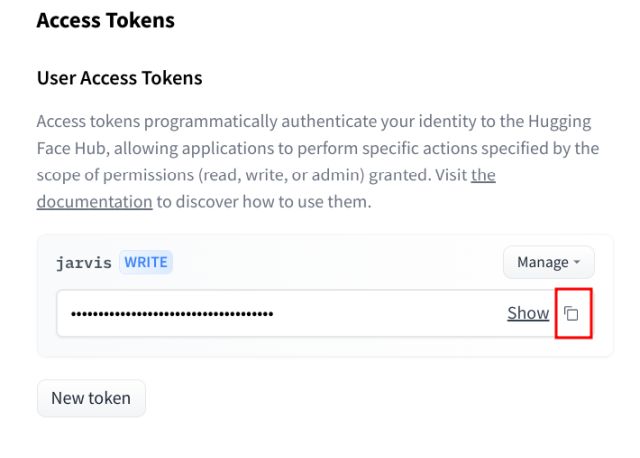

5. Next, click the “copy” button, and the token will be copied to the clipboard. Save the token to a Notepad file.
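Before pasting the two values into the demo, a quick format check can catch a copy-paste mistake such as a stray space or a swapped key. The sketch below assumes that OpenAI secret keys start with `sk-` and Hugging Face tokens start with `hf_`, which is the usual format at the time of writing but not something this guide can guarantee. The function name `looks_like_token` is hypothetical, and the check says nothing about whether a key is actually valid.

```python
def looks_like_token(value: str, prefix: str) -> bool:
    """Return True if the value has the expected prefix and no inner whitespace.

    This only checks the shape of the string. It does not contact
    OpenAI or Hugging Face, so a well-formed but revoked key still passes.
    """
    cleaned = value.strip()
    has_no_spaces = not any(ch.isspace() for ch in cleaned)
    return (
        cleaned.startswith(prefix)
        and len(cleaned) > len(prefix)
        and has_no_spaces
    )


# Assumed prefixes (see the note above).
OPENAI_PREFIX = "sk-"
HUGGING_FACE_PREFIX = "hf_"

if __name__ == "__main__":
    # Placeholder values, not real credentials.
    print(looks_like_token("sk-example123", OPENAI_PREFIX))
    print(looks_like_token("hf_example123", HUGGING_FACE_PREFIX))
```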

Step 2: Start Using Microsoft JARVIS (HuggingGPT)

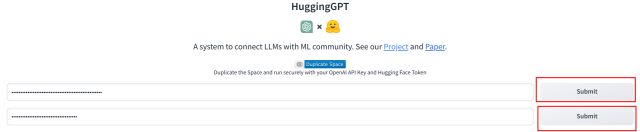

1. To use Microsoft JARVIS, open this link and paste the OpenAI API key in the first field. After that, click on “Submit”. Similarly, paste the Hugging Face token in the second field and click “Submit”.

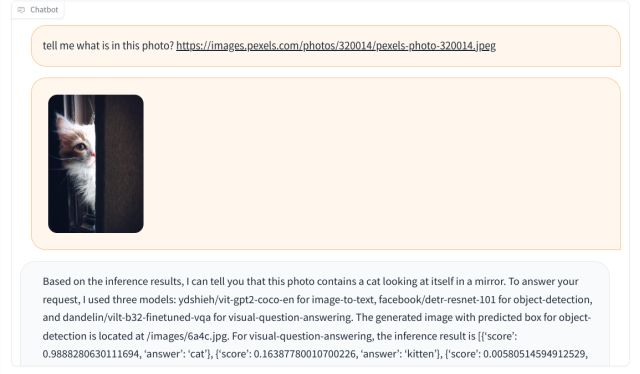

2. Once both tokens are validated, scroll down and enter your query. To get started, I asked JARVIS what the photo was about and shared a URL of the image.

3. It automatically downloaded the image and used three AI models for the task: ydshieh/vit-gpt2-coco-en (to convert image to text), facebook/detr-resnet-101 (for object detection), and dandelin/vilt-b32-finetuned-vqa (for visual question answering). Finally, it concluded that the photo contains a cat looking at itself in the mirror. Isn’t that amazing?

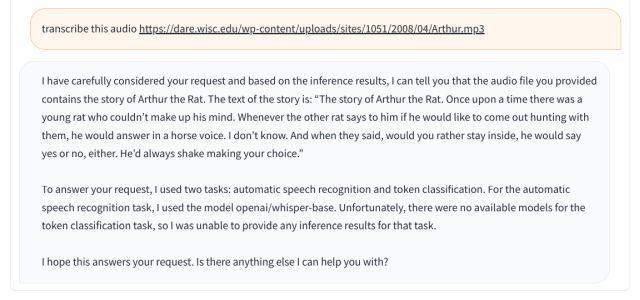

4. In another example, I asked it to transcribe an audio file, and it did so using the openai/whisper-base model. There are many use cases for JARVIS, and you can try them out on Hugging Face for free.
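The image example from step 3 can be written down as a small task plan: one entry per model, each with the input it needs. The model IDs and their roles come from the run described above, but the dictionary field names (`id`, `task`, `model`, `args`) and the `build_plan` function are hypothetical and only meant to illustrate the idea, not to reproduce JARVIS’s internal format.

```python
# (task type, model ID) pairs as reported in the image example above.
MODELS_USED = [
    ("image-to-text", "ydshieh/vit-gpt2-coco-en"),
    ("object-detection", "facebook/detr-resnet-101"),
    ("visual-question-answering", "dandelin/vilt-b32-finetuned-vqa"),
]


def build_plan(image_url: str, question: str) -> list[dict]:
    """Return one task entry per model (hypothetical field names)."""
    plan = []
    for task_id, (task_type, model_id) in enumerate(MODELS_USED):
        args = {"image": image_url}
        # Only the question-answering model needs the user's question.
        if task_type == "visual-question-answering":
            args["text"] = question
        plan.append(
            {"id": task_id, "task": task_type, "model": model_id, "args": args}
        )
    return plan


if __name__ == "__main__":
    # The URL is a placeholder, not the image used in the test above.
    for entry in build_plan("https://example.com/photo.jpg", "What is this photo about?"):
        print(entry["task"], "->", entry["model"])
```

All three models receive the same image, and their separate answers (a caption, a list of detected objects, and a direct answer to the question) are what ChatGPT then merges into the final reply.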

Use Multiple AI Models At Once With HuggingGPT

So this is how you can use HuggingGPT to complete a task using different AI models. I tested JARVIS multiple times, and it worked pretty well, except that you often have to wait in a queue. You can’t run JARVIS locally on just any half-decent PC, as it requires at least 16GB of VRAM and around 300GB of disk space for the various models.
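If you are curious whether your own machine comes close, the disk requirement is easy to check. The following is a minimal sketch that compares free disk space against the roughly 300GB figure mentioned above. It treats 1GB as 1024³ bytes, which is an assumption, and it does not check VRAM, since that needs vendor-specific tooling. The function names are hypothetical.

```python
import shutil

REQUIRED_DISK_GB = 300  # approximate figure quoted in this article


def has_enough_disk(free_bytes: int, required_gb: int = REQUIRED_DISK_GB) -> bool:
    """Compare free space in bytes against the requirement (1GB = 1024**3 bytes)."""
    return free_bytes >= required_gb * 1024**3


def check_path(path: str = ".") -> bool:
    """Check the drive that holds the given path."""
    return has_enough_disk(shutil.disk_usage(path).free)


if __name__ == "__main__":
    print("Enough disk space for the models:", check_path())
```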

On Hugging Face too, you can’t clone it and skip the queue under the free account. You need to subscribe to run the powerful model on an Nvidia A10G, a large GPU that costs $3.15/hour. Anyway, that is all from us. If you want to use CodeGPT in VS Code for assistance while programming, head to our linked guide. And to use ChatGPT 4 for free, we have a list ready for you. Finally, if you have any questions, let us know in the comment section below.