As part of its continued efforts to keep its platform free from smut and graphic violence, YouTube says it took down more than 8 million videos during the fourth quarter of 2017, 6.7 million of which were first flagged by the company's automated moderation systems. Of those 6.7 million, the company says 76 percent were removed before they received a single view.

"The majority of these 8 million videos were mostly spam or people attempting to upload adult content – and represent a fraction of a percent of YouTube's total views during this time period," the company said.

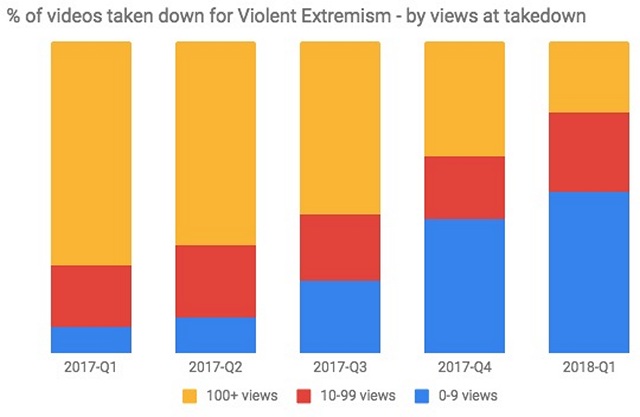

The company says it introduced machine learning to flag inappropriate content on its platform last June, and since then, the number of videos flagged by the software has risen sharply. Removals have also become faster: more than half of the videos now taken down for violent extremism have fewer than 10 views when they are removed.

By comparison, before the machine-learning system was introduced, just 8 percent of the videos flagged and removed for violent extremism were taken down with fewer than 10 views. "Our advances in machine learning enabled us to take down nearly 70 percent of violent extremism content within 8 hours of upload and nearly half of it in 2 hours," the report said.

The figures come from YouTube's first-ever Community Guidelines Enforcement Report, which also details other steps the company is taking to combat inappropriate content on the site. These include employing more than 10,000 people around the world to review videos and remove disturbing content, as well as partnering with more than 150 academics, government partners, and NGOs who contribute societal and technological expertise to the company's enforcement systems.