The latest version of Google Photos for iOS brings a depth-editing feature, which means you can now open any Portrait photo you’ve taken on your iPhone and adjust the background blur and focus in just a few steps.

Well, I’ve been taking a lot of photos on my iPhone XR lately. While iOS’ Photos app gets the job done for me, I was pretty pumped to check out what Google Photos could do, especially after seeing all the camera tricks it pulls off on the Pixel 3.

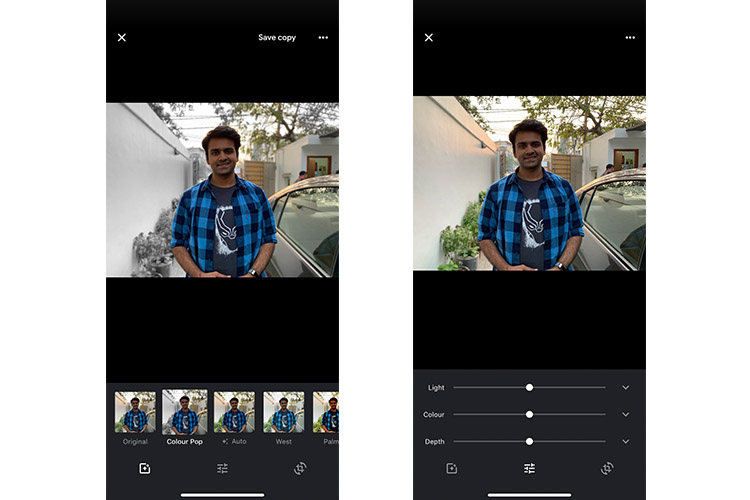

I updated Google Photos on my iPhone and headed out to capture some portrait photos. As you can see, in addition to the depth-editing feature, Google Photos has also gained the Color Pop feature we saw on the Pixel 3. It is, however, worth pointing out that you’ll have to capture a Portrait photo for either of these new features to work.

Surprising Results

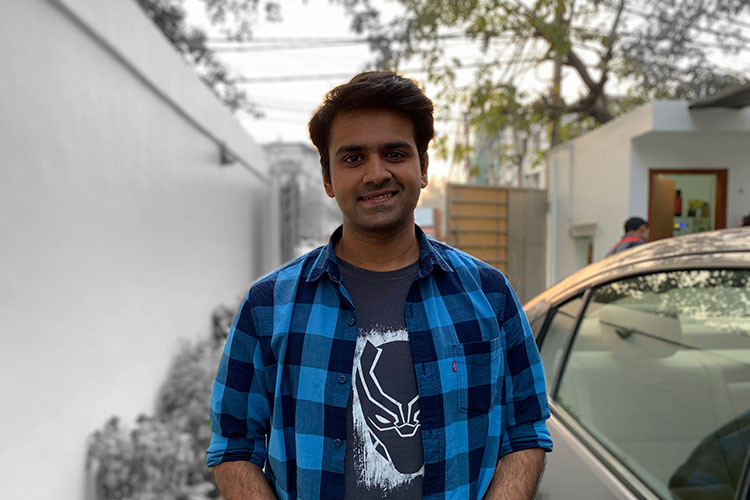

For the sake of comparison, I opened the same photo in both Google Photos and iOS’ Photos app. Honestly, I wasn’t expecting either app to clearly outperform the other. If anything, I expected Google Photos to produce better images. But wait till you see the results.

The Color Pop feature, as you can see, messed up the photo. Google Photos failed to detect the edges and applied the effect to only one side, while the cars, trees, and so on to my right remained untouched.

Moving on to the depth-editing feature, the results were sadly just as disappointing. In the photos below, I cranked the depth slider all the way up, and the background, particularly on my right, is still very much visible. iOS’ Photos app, on the other hand, did a commendable job.

After scratching my head for a few minutes, I realized that Google Photos was not able to recognize the subject. Thankfully, you can fix this by tapping the subject or the background, depending on what you want to be blurred out in your image. I found the results were noticeably better when I tapped the subject. Yes, it works, but it takes an extra step that you can avoid by using iOS’ native Photos app directly. Even then, Google Photos struggled with edge detection. See for yourself:

Here’s another image to show how consistently Google Photos failed me. In the gallery below, Google Photos failed to detect the edge around the cup. The image edited in iOS’ Photos app looks way better. Yes, it’s not flawless, but in my opinion it looks better than the one produced by Google Photos.

Have you updated your Google Photos app on iOS? If so, be sure to leave a comment detailing your experience with the app. I’d love to know your opinion.