Google’s annual developer conference started May 8 at the Shoreline Amphitheatre in Mountain View, California. While we all expected a bunch of new stuff from Google, including (obviously) new Android P features, the company went ahead and announced a slew of AI- and machine-learning-based features that can only be described as mind-blowing. I could round up the keynote, but instead, I invite you to think about just how far Google has come with its investments in voice, text-to-speech, artificial intelligence, and machine learning. I also invite you to think about just how far this “search giant” has come compared to its biggest rival: Apple.

Let’s compare and contrast the things Google showed off, versus their Apple counterparts.

Google Assistant

When Sundar Pichai started talking about Google Assistant, I fully expected to hear the things he said about Assistant becoming smarter and more natural. After all, everyone building a smart assistant is working towards that very goal. He announced six new voices. Everything was great, but expected… and then he dropped Google Duplex.

Hearing Google Assistant talk to a hair salon in a completely natural, even human, way was the first sign that Google had set its sights perfectly when it started investing in AI, machine learning, its very own Tensor Processing Units, and so much more.

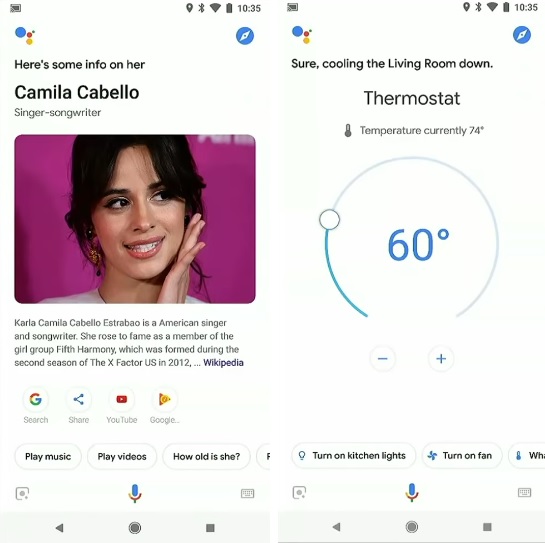

But they didn’t stop there. Google Assistant is also getting visual upgrades that make better use of the screen real estate available to it. It’ll respond with positive reinforcement to polite commands (Google calls it ‘Pretty Please’), and it’s also competing with Alexa on follow-up requests, which are rolling out soon. Plus, it’ll be able to handle multiple queries stitched together in complicated ways.

In contrast, Apple’s Siri is miles behind the Assistant. Forget about making calls to real humans; Siri, for the most part, doesn’t understand speech all that well. It fails to respond correctly to queries, it sometimes gets foul-mouthed, and it brings up unexpected responses more often than not. The way Siri fails so often used to be quite hilarious (there’s an entire subreddit for it!), but it’s not funny anymore. The only thing Siri reliably does is set alarms.

Google Lens

Google Lens, the company’s computer-vision feature, is coming to more and more phones this year. Even third-party manufacturers that usually don’t get Google features very early have teamed up with the company to bring Google Lens (and other stuff) to their smartphones. Google Lens is getting the ability to copy text from the real world, a feature that is still really cool to see in action even though I won’t be using it that often. It will also be integrated into the camera app on a number of phones (sort of like Bixby Vision on Samsung phones, but better) and will bring real-time results right into the camera’s viewfinder!

It may not sound all that exciting, but this sudden increase in the features, functionality, and support for Google Lens is a good pointer to the things yet to come. Android, both as an operating system and as an ecosystem, looks like it’s finally starting to come together.

I’d compare Google Lens to something from Apple, but Apple doesn’t have a product like it, and I doubt Cupertino is going to pay attention now. If Apple has the time, I’d really like to see it invested in bringing Siri up to standard.

Gmail

Gmail’s new ‘Smart Compose’ feature, which is yet to roll out, is another incredible way the company is showing off its machine learning and AI capabilities. Smart Compose is going to take ‘predictive words’ a step further and predict entire phrases, all thanks to the massive amounts of data Google has accumulated over the years and the company’s early investments in AI.

Apple Mail, on the other hand, is still the same Apple Mail, and even though Apple does update it and add new features to it, it remains eons behind Gmail.

Google Maps

The same story repeats itself with Apple Maps, which is more or less a wasteland of poor navigation and less-than-detailed information about locations. Meanwhile, Google Maps is getting new features like ‘Your Match’, and the company is even working on an AR-based navigation feature for walking, which looks awesome.

Google has had the fundamentals of Maps down for a long time now, so it’s time to add new features, and in the case of AR-navigation, I’d say it’s time to let loose and go crazy.

Google Photos

To be honest, when Sundar Pichai started talking about those ‘suggested actions’ in Google Photos, I was pretty underwhelmed. I mean, sure, Google Photos can suggest sharing options based on the people it sees in the photos. Nice. However, I heard myself audibly gasp when Google Photos took a picture of a document (not even a straight picture, an angled one), straightened it out, and converted it into a PDF. I mean, for heaven’s sake, that won me over. I’m done, and I’m going to marvel at that for the next few days at least.

Plus, Google Photos can now not only make edits on its own, it can even colorize black and white photos. I’m not fully convinced that will work well enough, but hey, if Google can fix my stupidly angled photo of a document, I’m sure colorizing photos is no big deal.

Android

I know, I could’ve started off with Android, but I wanted to draw attention to the fact that Google isn’t ahead of Apple solely because of Android and the new features it’s getting this time around. In fact, in a lot of ways, iOS is amazing too, but the iOS experience has, of late, become more tiring and cumbersome than it used to be. But that’s a sob story for a later time; right now, we’re talking about Android P and iOS 11 (and, by extension, iOS 12).

Starting off with the very basics, Android P will begin incorporating AI everywhere, and Google is starting to use on-device machine learning. Apple has been using on-device machine learning for quite some time now, but honestly, where is it using it? Photos.

Google has instead opted to make the Android experience better with machine learning. There’s App Actions, Slices, Adaptive Brightness, and Adaptive Battery, all things that will learn from the user and make the experience better over time. There’s also a context-aware navigation bar that brings up buttons depending on when you might actually need them.

The only place I have some minor issues with Android P is gestures. Google has gone ahead and basically ripped gestures off of the iPhone X, and honestly, that’s not that bad. However, Google’s implementation is a tad, well, annoying. A double swipe up for the App Drawer is plain wrong, and even scrubbing the ‘Home Pill’ to switch between apps doesn’t work as well as I remember it working on the iPhone X.

Google I/O Sounds Like a Battle Cry, and Apple Needs to Rally Its Troops

When Google first started dabbling in things like computer vision, artificial intelligence, and a lot of other stuff we don’t fully understand, its products (like Google Goggles) were really quite… well, underwhelming. This year, however, Google has shown us all just why it was so deeply invested in those technologies. The company has taken a giant leap with AI and ML, and honestly, Apple really needs to rethink its strategy and ask just how long it can keep turning a profit based solely on the fact that the iPhone’s hardware is unparalleled and that its devices play so well together.